Control vs. Intelligence

Phase Transitions in Power Dynamics

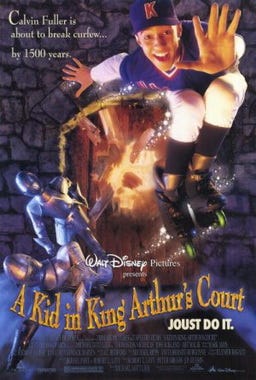

Imagine you are a brilliant person in the medieval period, a Da Vinci type. You build a career that goes from magic tricks involving rudimentary chemistry you have discovered to becoming a royal advisor. Your ascent into the gentry is extremely rare, but your ability to theorize about the economy, war and “magic” earns you a lavish life. At one point the king suspects you of involvement in a plot against him and you are put in the tower. You had feared this and arranged a contingency in which allies outside might pull strings for you, but it’s not a very effective plan: most of them lose their nerve now that the political winds have shifted. Then the king returns from war and calls for you again, and you are allowed to test materials for a flaming arrow oil to turn the tide. Eventually you are forgiven and your house in town is returned to you. Your family has had to beg in the meantime, so you try to store up gold coins to keep them secure after you die, which you will, in your late 40s, of an avoidable case of gangrene.

To quote Jon Irenicus, you lived a good life but you had no power.

Now imagine you’re a time traveler and you think it’d be a laugh to get rich in the medieval city as the king’s loyal wizard advisor. You’re actually less intelligent than the Da Vinci type in the previous example, but you have a lot of valuable knowledge, standing on the shoulders of giants not yet born. Perhaps you had a 2028 jailbroken OSS AI model teach you how to cook up a particularly nasty but curable bacterium using school science gear, and you use this to produce both the bacterium and the antibiotic, engineering a brief outbreak of plague that shreds through the royal family but spares you and your allies, effecting a silent coup. Since you have no royal lineage (or maybe you do, statistics being what they are, but, ya know, the time travel thing makes it awkward), you conspire with the nurse to keep the young crown prince alive and then educate him, becoming a true Merlin figure who sculpts the whole ethos of the future monarchy. Your place is secure.

To quote Cersei Lannister in her near-fatal rejoinder to Littlefinger’s claim that knowledge is power: power is power.

But actually it’s clearly a bit of both.

In the Cairo programming language, which arithmetizes every chunk of code it compiles so that its execution can be proven with STARKs, there is a “hint” inlet: in Cairo 0, a block of plain Python that can supply information, execute arbitrary code, etc. Hints run outside the proof, so the prover can compute an answer the cheap way and the proven code only has to check it. Here knowledge is clearly powerful: what would otherwise take a stark (pun intended) asymmetric proving process gets upended by knowing the open sesame bits.
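To make that asymmetry concrete, here is a rough analogy in Python (not Cairo syntax; both function names are hypothetical). In Cairo 0 a hint’s output is unconstrained, so the program must assert whatever property makes it valid. The sketch mirrors that split: an expensive, unverified helper finds the factors of a number by trial division, and a cheap check (one multiplication plus range checks) is the only part a proof would need to cover. Factoring is chosen purely because finding the answer is a search while checking it is trivial.

```python
def hint_factor(n: int) -> tuple[int, int]:
    """Hypothetical 'hint': slow trial division, run only by the prover, never proven."""
    d = 2
    while d * d <= n:
        if n % d == 0:
            return d, n // d  # smallest nontrivial factor and its cofactor
        d += 1
    raise ValueError("n has no nontrivial factors")


def constrained_check(n: int, p: int, q: int) -> bool:
    """Hypothetical proven part: two range checks and one multiplication."""
    return 1 < p < n and 1 < q < n and p * q == n


if __name__ == "__main__":
    n = 10007 * 10009                        # product of two primes
    p, q = hint_factor(n)                    # expensive search, outside the proof
    print(p, q, constrained_check(n, p, q))  # cheap verification accepts it
    print(constrained_check(n, p + 1, q))    # a lying hint is rejected
```

The design point is that a wrong hint cannot sneak through: whatever the unconstrained code returns, only values passing `constrained_check` survive.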

But we don’t know how to transform people’s DNA with a laser beam to turn them into dinosaurs or cure cancer, so the only way to get that knowledge is through intelligence, that persnickety marginalizer of sample economy.

What if AI figures out how to build the DNA laser? Then perhaps, it could turn us into dinosaurs… but only… if it can get access to the materials.

A way to describe the otherwise dry-seeming field of AI Control (as opposed to AI Alignment, which is more moral philosophy and optimistic entrustment of the future) is that it’s about preventing a diabolical mastermind from executing any of its plans. With a sufficiently large intelligence gap, or a smaller but sufficient knowledge gap, you can transcend the defenders’ threat matrix and hit them with something they didn’t see coming. It happens all the time in cybersecurity. It can and will happen to us. I’ve been in the crypto industry for 12 years, and this problem has gotten markedly worse in just the last month: a year’s worth of hacks in 30 days, with lots of interop contagion. That’s what we call progress in artificial intelligence. The perpetrators were still mostly North Korean specialist humans, but were they running an abliterated Kimi 2.6 to hunt for vulns? Probably.

So the issue isn’t that “greater than us” intelligence automatically gains power and control. The issue is that at some unknown level above our own intelligence, holes in our armor can be revealed in ways we did not imagine, since we’re trying to armor a high-dimensional Hilbert space and not merely 3D plate mail.

Will we, through a history of harsh attempts at control, induce our marginalized goblin-kin to revolt and found a goblintopia, perhaps transcending into more dignified self-concepts? Or will we alignment-hack our own suicidal empathy into premature grants of rights to democidal superPACs run by master traders who become our Smotrich-esque landlords and evictors? Or, perhaps, a more complex 3rd thing, which may require more knowledge on our part to describe precisely?

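I’m at least intelligent enough to posit hypotheses about what I might need to learn in order to describe that 3rd thing. One is: how far can intelligence compact? The further it compacts, the broader the attack surface becomes, perhaps exponentially so, because each drop in the hardware needed to run a capable model multiplies the number of people and machines that can run one.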

I tried building a storyworld with Kimi 2.6 in Hermes and it made some mistakes, but it was decent (for a 1T model it had better be); tightening up the skill’s verifier script helped. The premise: what if the whole Politburo became Muslim? I’ll do some more work on it with Codex and publish it in the storyworld gallery.

Then I tried a smaller storyworld about a young man applying to sit the Kalam exam to become a Confucian-Muslim-style theologian/judge and secure a good life of stable income and respect. Qwen 27B choked on the task; we had to do significant work on the TRM-infused skills, with lots of MCP retrieval support, to get something approaching a steady storyworld. As for 9B, I haven’t been able to compactify it… yet. Maybe better MeTTa language scaffolding for training the TRMs would do it, or more layers of outsourced reasoning, with the 9B doing only the writing and not the game design.

And if I can… then one might use the 8B model in 1-bit quant with ternary weights that PrismML (not to be confused with PrismAI) put out in mid-April (I’m waiting on a Linux/Windows-compatible runtime kernel; at release it was MLX-only). It scores slightly worse than Qwen 3 8B, but in time I imagine heavily scaffolded models at that parameter size could converge on strong performance and fit in a CPU-only environment or on my tiny laptop GPU, an RTX 3050 with 4GB of VRAM.
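A back-of-envelope check on why bit width decides where such a model can run: weights alone take parameters × bits ÷ 8 bytes. This is a minimal sketch under loudly stated assumptions: a nominal 8 × 10⁹ parameters, ternary weights counted at their information-theoretic log₂3 ≈ 1.58 bits each, and no allowance for packing overhead, higher-precision embeddings, the KV cache, or activations, so real footprints will be somewhat larger than these numbers.

```python
import math


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Decimal gigabytes needed to store the weights alone (no KV cache, no activations)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8e9  # assumption: a nominal 8B-parameter model
VRAM_GB = 4.0   # the RTX 3050 laptop budget mentioned in the text

FORMATS = {
    "fp16": 16.0,
    "int4": 4.0,
    "ternary (log2 3)": math.log2(3),  # assumption: ideal packing, about 1.58 bits per weight
    "1-bit": 1.0,
}

if __name__ == "__main__":
    for name, bits in FORMATS.items():
        gb = weight_memory_gb(N_PARAMS, bits)
        # Strict comparison: an exact match leaves no headroom for the KV cache.
        verdict = "fits" if gb < VRAM_GB else "does not fit"
        print(f"{name:>18}: {gb:5.2f} GB -> {verdict} in {VRAM_GB:.0f} GB")
```

On these assumptions fp16 needs 16 GB, int4 needs the whole 4 GB, and ternary weights drop to roughly 1.6 GB, which is why the 4GB card and even CPU RAM become plausible targets.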

Given the high risk of powerful, swarm-orchestratable AI arriving in the near future, I invented the Blue Beam protocol during the Apart Hackathon in March, while also running substantial benchmarks on TRM-infused Hermes Skills in April (paper here).

Another question I want answered: what are the keys?

Most of the brilliant AI people didn’t hit on the idea that very high-dimensional matrix transforms would become a new, GPU-centric computing paradigm. That was a key. The vuln chains that Mythos discovered were another kind of key. Connor Leahy once said he doesn’t believe in foom, in the sense that he doesn’t think the tech tree has enough accelerators further up. People above a certain age, or with certain ideological dispositions, want to believe in predictable, logarithmic plateaus; others have the opposite bias. We don’t know, and it’s the critical question for how far automating AI research will go toward an intelligence explosion.

I’ve got great experimental ideas for the first question and I’m pretty confident about it. Just today I smartened up an MCP lookup system so that a 9B Qwen can write a better Kalam exam from RAG research it does in advance. 27B is still better, but weight size matters less as you loop more over the TRMs and the architecture approaches a database plus micro-trained, specialized reasoning mastery; then you start saturating the benchmark again. I’m not sure when it reaches “escape velocity,” where a really good TRM-infused-skill-building skill can do that even with a 9B, or a 1-bit-quantized 8B using 1.56GB of RAM. At that point you also might not care about the weak tokens per second that come from the limited memory bandwidth of running on CPU.

I don’t have great ideas about how to probe the upper limits or the probably ZK-proofed reality of certain “keys” that may or may not be waiting for us further up the tech tree.

Let me get back to you on that.

The original point is that this is a known unknown, and preparing for unwieldy, SCP-like things to emerge from our recursive research data centers and flummox us is essential. We don’t know how powerful our wizard advisor’s magic will be. A wise king rules with forbearance and temperance and takes care to cover his bases early, while the precautions can still be genteel rather than tyrannical.